For many CFOs, the question of whether to invest in AI has already been answered. Money has been approved, initiatives are running, and expectations have been set across the business. The real pressure now is different. It is no longer about experimentation. It is about being able to point to real business impact in a world where capital is constrained and every major investment is under scrutiny.

This is a familiar phase in large organisations. There is plenty of activity. Teams are busy, dashboards are full, and success stories circulate in presentations. Yet when finance looks for clear signals in the numbers, the picture is often less straightforward. Some processes are faster, but costs do not always fall. Some decisions look better supported, but complexity creeps in elsewhere. From a CFO’s point of view, the uncomfortable question becomes simple: is all of this effort actually changing how the business performs, economically and operationally?

At the same time, priorities keep shifting. One year the focus is operating model change, then a major platform investment, then cost reduction, and now, increasingly, AI. Each shift makes sense in isolation. But over time, organisations leave a trail of half-built management approaches, under-used capabilities, and unfinished change. The business stays busy, but it does not always get better at turning big bets into consistent results. This is where the CFO’s role starts to change. It becomes less about approving spend, and more about making sure those investments add up to something durable.

From approving spend to changing how the business runs

Imagine a large organisation investing in AI across its core operations, in areas like claims, lending, or customer service. The ambition is sensible: reduce handling time, improve decision quality, cut rework, and move work through faster. After a few months, the signs look positive. Some cases are processed more quickly. Agents get better summaries. Backlogs shrink in a few places. Leaders can point to pilots and early wins, and the organisation feels like it is moving.

Then the CFO asks a different question: “So what has actually changed in the economics of this operation?” The answers come back in pieces. Cycle time is down in one step, but cost-to-serve is flat because exception handling still bounces between teams. Quality is better for standard cases, but rework has increased elsewhere. The organisation is not failing. It is discovering, in real time, that tools can improve local performance, while end-to-end results only change when the surrounding system changes too.

This is the moment when the CFO’s problem shifts. The question is no longer whether AI work is progressing, but whether the business is actually changing how it runs in a way that can be repeated, governed, and explained in financial terms. This is where AI either becomes a collection of interesting improvements, or a genuine shift in performance.

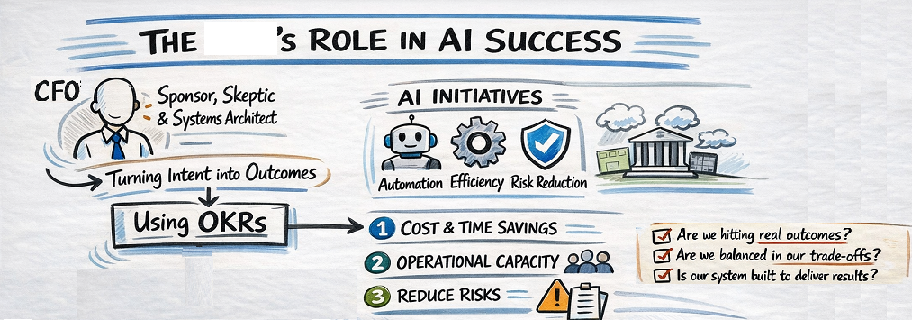

Sponsor, sceptic, and systems architect

Traditionally, the CFO’s role in this kind of change has been that of sponsor. Does the investment fit the strategy? Is the business case credible? Can the organisation afford it, and does it belong in this year’s portfolio? That role still matters. Without it, very little gets started. But sponsorship mainly answers the funding question, not the outcome question.

In practice, most CFOs also play a second role: sceptic. Not scepticism about technology, but about impact. Where exactly is the improvement in cost, margin, capacity, or risk? Which gains will hold at scale, and which are still fragile? This challenge function protects the organisation from confusing impressive activity with economic progress.

A third role is now becoming unavoidable: the CFO as a systems architect for outcomes. This is not about designing models or tools. It is about shaping how goals are set, how priorities turn into work, how trade-offs are made, and how performance is judged across the business. AI will accelerate whatever system it is placed into. If the system is fragmented, acceleration multiplies noise. If the system is coherent, acceleration compounds value.

Where OKRs turn intent into outcomes

This is where OKRs stop being a theoretical management tool and start becoming practical for a CFO. They define, in measurable terms, what the business is trying to change. Only then does it make sense to decide where AI should be applied. Here are three examples from core operations and operational risk.

Example 1: Claims or service operations automation

OKR

- Objective: Reduce the cost and time to resolve standard cases without increasing operational risk.

- Key Results:

  - Reduce average handling time from 18 minutes to 12 minutes

  - Reduce cost-to-serve per case by 15%

  - Keep rework and error rates below 2%

  - No increase in high-severity incidents

AI investment

- Use AI to triage and auto-process low-complexity cases, and to summarise cases for agents.

Operational performance improvement

- Fewer manual handoffs, faster straight-through processing, clearer case summaries for complex work.

Bottom-line impact (CFO view)

- Lower unit cost in operations shows up in operating expense.

- Stable error rates protect the risk profile and keep remediation costs contained.
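The key results in this example can be written down as explicit targets and checked against measured values. What follows is a minimal sketch, not a prescribed method: the names `KeyResult`, `met`, and `pct_reduction` are hypothetical, and every measured value (including the baseline of four high-severity incidents and the unit costs) is an illustrative assumption. Only the targets come from the example.

```python
import operator
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class KeyResult:
    """One key result: a measured value compared against an agreed target."""
    name: str
    measured: float
    target: float
    compare: Callable[[float, float], bool]  # e.g. operator.le means "at most"

    def met(self) -> bool:
        return self.compare(self.measured, self.target)


def pct_reduction(baseline: float, current: float) -> float:
    """Percentage reduction from baseline to current."""
    return (baseline - current) / baseline * 100.0


# Targets are from Example 1; all measured values are made-up assumptions.
key_results = [
    KeyResult("Average handling time (min)", 13.0, 12.0, operator.le),
    KeyResult("Cost-to-serve reduction (%)", pct_reduction(10.00, 8.40), 15.0, operator.ge),
    KeyResult("Rework and error rate (%)", 1.6, 2.0, operator.lt),
    # Assumed baseline of 4 incidents; "no increase" means at most 4.
    KeyResult("High-severity incidents", 4, 4, operator.le),
]

unmet = [kr.name for kr in key_results if not kr.met()]
print("Unmet key results:", unmet)
```

With these assumed figures, cost and risk are on track while handling time is still short of target, which is exactly the kind of partial picture a CFO needs to see in one place.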

Example 2: Increasing operational capacity without burning out teams

OKR

- Objective: Increase throughput while keeping quality and employee sustainability high.

- Key Results:

  - Increase cases handled per FTE by 20%

  - Reduce backlog volume by 30%

  - Keep exception rework below 2%

  - Maintain employee engagement score, or improve it by X%

  - Keep attrition and sickness absence below Y%

AI investment

- Use AI to prioritise work queues, draft responses, and support decision-making in complex cases.

Operational performance improvement

- More work completed per person, fewer delays, less cognitive load from repetitive tasks, more focus on judgement-heavy work.

Bottom-line impact (CFO view)

- Higher output without proportional headcount growth improves operating leverage.

- Better engagement and lower attrition reduce the costs of hiring, training, and lost productivity.
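The operating-leverage claim can be checked with simple arithmetic. The sketch below assumes a hypothetical fully loaded cost of 60,000 per FTE, 3,000 cases per FTE per year, and an AI running cost of 1.00 per case; none of these figures come from a real operation. The function names `unit_cost` and `pct_change` are likewise illustrative.

```python
def unit_cost(cost_per_fte: float, cases_per_fte: float,
              ai_cost_per_case: float = 0.0) -> float:
    """Cost per case: labour cost spread over cases, plus any AI running cost."""
    return cost_per_fte / cases_per_fte + ai_cost_per_case


def pct_change(before: float, after: float) -> float:
    """Percentage change from before to after (negative means a reduction)."""
    return (after - before) / before * 100.0


# All inputs are illustrative assumptions.
COST_PER_FTE = 60_000.0
BASELINE_CASES_PER_FTE = 3_000.0
UPLIFT = 1.20  # the 20% key result from Example 2

baseline = unit_cost(COST_PER_FTE, BASELINE_CASES_PER_FTE)
labour_only = unit_cost(COST_PER_FTE, BASELINE_CASES_PER_FTE * UPLIFT)
with_ai_cost = unit_cost(COST_PER_FTE, BASELINE_CASES_PER_FTE * UPLIFT,
                         ai_cost_per_case=1.00)

print(f"Labour cost per case: {pct_change(baseline, labour_only):.1f}%")
print(f"Including AI running cost: {pct_change(baseline, with_ai_cost):.1f}%")
```

Two points follow from the arithmetic. A 20% gain in cases per FTE lowers labour cost per case by about 16.7% (1 - 1/1.2), not 20%. And once the assumed AI running cost is included, the net reduction in this illustration is about 11.7%, which is the figure that belongs in the business case.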

Example 3: Reducing operational risk and control failures

OKR

- Objective: Reduce operational risk events without slowing down core processes.

- Key Results:

  - Reduce high-severity incidents by 25%

  - Reduce manual overrides by 30%

  - Keep processing times within agreed SLAs

  - Reduce remediation and write-off costs by X%

AI investment

- Use AI to flag anomalies, support control checks, and identify risky patterns earlier in the process.

Operational performance improvement

- Fewer late-stage surprises, smoother flow through controls, fewer emergency interventions.

Bottom-line impact (CFO view)

- Fewer loss events and lower remediation spend improve the risk profile and reduce volatility in costs.

Across all three examples, the pattern is the same. The OKRs define what the business is trying to change, in terms both operations and finance can understand. The AI investment is then chosen to move those measures. Operational performance improvements show up in day-to-day work. And the CFO can see the effect in cost, capacity, margin, or risk. This is what it means for OKRs to become the shared language between investment, operations, and financial outcomes.

When AI meets reality: governance and trade-offs

Once you work this way, a different set of questions appears. Not “Can we do this with AI?” but “What trade-offs are we willing to make?” In operational environments, these trade-offs are concrete. For example, pushing more cases through straight-through processing may improve speed and cost, but it may also increase exposure in edge cases. Tightening controls may reduce losses, but it may also slow down throughput and frustrate customers and staff.

With clear OKRs, these are no longer abstract debates. A proposal that improves cycle time but breaks the error-rate or risk key results is not “innovative”; it is simply misaligned with the agreed outcomes. The discussion shifts away from opinions about tools and towards evidence about system-wide impact.
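One way to make this check explicit is to test a proposal's projected effects against every agreed key result, not only the one it is designed to improve. The sketch below is illustrative: the function `misaligned_key_results`, the metric names, and the projected figures are all hypothetical, and the ceilings are borrowed from Example 1 (with an assumed baseline of four high-severity incidents). Treating a value exactly at the ceiling as acceptable is also an assumption.

```python
def misaligned_key_results(projected: dict[str, float],
                           ceilings: dict[str, float]) -> list[str]:
    """Return the key results whose projected value exceeds the agreed ceiling."""
    return sorted(
        name for name, ceiling in ceilings.items()
        if projected[name] > ceiling
    )


# Agreed ceilings, taken from Example 1 (incident baseline is assumed).
ceilings = {
    "avg_handling_minutes": 12.0,
    "rework_rate_pct": 2.0,
    "high_severity_incidents": 4,
}

# Hypothetical proposal: faster handling, but more rework.
proposal = {
    "avg_handling_minutes": 10.5,
    "rework_rate_pct": 3.1,
    "high_severity_incidents": 4,
}

broken = misaligned_key_results(proposal, ceilings)
if broken:
    print("Misaligned with agreed outcomes:", broken)
else:
    print("Consistent with all key results")
```

Here the proposal beats the handling-time target but breaks the rework ceiling, so it is flagged as misaligned regardless of how attractive the speed gain looks on its own.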

This is why CFOs end up at the centre of these decisions. They are the ones accountable for balancing efficiency, resilience, risk, and sustainability across the whole business, not just inside one function. In an AI-enabled organisation, governance is not about slowing things down. It is about making sure acceleration does not pull the system apart.

The human side of the equation

There is one more dimension that matters, and it is easy to miss if the conversation becomes purely financial. Productivity is not just about output. It is also about whether people can do good work without burning out, whether they spend time on judgement rather than drudgery, and whether the organisation is becoming easier or harder to operate day to day.

That is why the second example deliberately included employee measures alongside throughput and cost. If AI increases volume but drives up attrition, sickness absence, or disengagement, the economics will eventually reverse. A human-centred view is not in conflict with financial discipline. It is part of it. Sustainable performance shows up in both the numbers and the lived experience of the people doing the work.

Bringing it together

AI is not just a technology bet. It is a test of whether the organisation has a management system capable of turning intent into outcomes. OKRs give the CFO a practical way to connect strategy, investment, operational change, and financial results into one coherent story. Priorities will shift and tools will evolve, but this discipline is what allows learning and value to compound rather than reset.

In that sense, the CFO’s role is no longer only to sponsor or to challenge. It is to architect the conditions under which AI, and any other major change, can actually pay off.

A few questions worth reflecting on

- If you look at your current AI initiatives, can you clearly explain which operational measures they are meant to move, and where those show up in the P&L or risk profile?

- Are your OKRs describing real business outcomes, or are they mostly tracking activity and delivery?

- When trade-offs appear between speed, cost, risk, and people sustainability, do you have a shared way of making those decisions explicit?

- And perhaps most importantly: is your organisation getting better, over time, at turning big bets into repeatable results, or does each new wave of investment still feel like a reset?
